Responsive Characters from Motion Fragments

People

- Jim McCann (Carnegie Mellon University)

- Nancy Pollard (Carnegie Mellon University)

Abstract

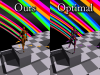

Our prototype in action

In game environments, animated character motion must rapidly adapt to changes in player input. For example, if a directional signal from the player's gamepad is not incorporated into the character's trajectory immediately, the character may blithely run off a ledge. Traditional schemes for data-driven character animation lack the split-second reactivity required for this direct control; while they can be made to work, motion artifacts will result. We describe an on-line character animation controller that assembles a motion stream from short motion fragments, choosing each fragment based on current player input and the previous fragment. By adding a simple model of player behavior we are able to improve an existing reinforcement learning method for precalculating good fragment choices. We demonstrate the efficacy of our model by comparing the animation selected by our new controller to that selected by existing methods and to the optimal selection, given knowledge of the entire path. This comparison is performed over real-world data collected from a game prototype. Finally, we provide results indicating that occasional low-quality transitions between motion segments are crucial to high-quality on-line motion generation; this is an important result for others crafting animation systems for directly controlled characters, as it argues against the common practice of transition thresholding.
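The abstract describes the controller only at a high level. The Python sketch below illustrates the general idea of precalculating fragment choices with value iteration, indexed by the previous fragment and the current player input, with a Markov model of how that input changes. It is not the authors' implementation: the scoring tables, the player-model probabilities, the discount factor, and the names `value_iteration` and `next_fragment` are hypothetical assumptions chosen for illustration.

```python
from typing import List, Tuple

Table = List[List[float]]


def value_iteration(
    transition_quality: Table,  # hypothetical [f_prev][f_next] smoothness score
    control_match: Table,       # hypothetical [f][c] score: how well fragment f follows control c
    player_model: Table,        # assumed Markov model: [c][c_next] = P(control changes from c to c_next)
    gamma: float = 0.9,         # assumed discount factor
    tol: float = 1e-6,
    max_iters: int = 10_000,
) -> Tuple[Table, List[List[int]]]:
    """Precompute a fragment-choice table indexed by (previous fragment, current control)."""
    n_frag = len(transition_quality)
    n_ctrl = len(player_model)
    value = [[0.0] * n_ctrl for _ in range(n_frag)]

    def q(f_prev: int, c: int, f_next: int, v: Table) -> float:
        # Immediate reward: transition smoothness plus how well the next fragment matches the input.
        immediate = transition_quality[f_prev][f_next] + control_match[f_next][c]
        # Expected future value, averaging over how the input may change while f_next plays.
        future = sum(player_model[c][c2] * v[f_next][c2] for c2 in range(n_ctrl))
        return immediate + gamma * future

    for _ in range(max_iters):
        new_value = [
            [max(q(f, c, g, value) for g in range(n_frag)) for c in range(n_ctrl)]
            for f in range(n_frag)
        ]
        delta = max(
            abs(new_value[f][c] - value[f][c])
            for f in range(n_frag)
            for c in range(n_ctrl)
        )
        value = new_value
        if delta < tol:
            break

    # Greedy policy with respect to the converged values: the precomputed choice table.
    policy = [
        [max(range(n_frag), key=lambda g: q(f, c, g, value)) for c in range(n_ctrl)]
        for f in range(n_frag)
    ]
    return value, policy


def next_fragment(policy: List[List[int]], current_fragment: int, current_control: int) -> int:
    # At run time, choosing the next fragment is a single table lookup.
    return policy[current_fragment][current_control]


if __name__ == "__main__":
    # Toy example: fragment 0 = "run left", fragment 1 = "run right"; control 0 = left, 1 = right.
    value, policy = value_iteration(
        transition_quality=[[1.0, 0.0], [0.0, 1.0]],  # switching direction is a rough transition
        control_match=[[1.0, -1.0], [-1.0, 1.0]],
        player_model=[[0.9, 0.1], [0.1, 0.9]],        # the player usually keeps the same direction
    )
    print(policy)  # prints [[0, 1], [0, 1]]
```

In this toy setup, switching fragments scores 0.0 for transition quality, yet the computed policy still switches as soon as the control changes. A rule that discarded every transition below some quality threshold would remove that option, which loosely mirrors the argument against transition thresholding above.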

Citation

James McCann and Nancy S. Pollard. Responsive Characters from Motion Fragments. ACM Transactions on Graphics (SIGGRAPH 2007), Vol. 26, No. 3, August 2007.

Funding

This research is supported by:

- NSF IIS-0326322

- NSF ECS-0325383

- NSF EIA-0196217 (mocap database)