http://graphics.cs.cmu.edu/projects/discriminativeModeSeeking/

Mid-Level Visual Element Discovery as Discriminative Mode Seeking

People

Abstract

Recent work on mid-level visual representations aims to capture information at the level of complexity higher than typical “visual words”, but lower than full-blown semantic objects. Several approaches have been proposed to discover mid-level visual elements, that are both 1) representative, i.e. frequently occurring within a visual dataset, and 2) visually discriminative. However, the current approaches are rather ad hoc and difficult to analyze and evaluate. In this work, we pose visual element discovery as discriminative mode seeking, drawing connections to the the well-known and well-studied mean-shift algorithm. Given a weakly-labeled image collection, our method discovers visually-coherent patch clusters that are maximally discriminative with respect to the labels. One advantage of our formulation is that it requires only a single pass through the data. We also propose the Purity-Coverage plot as a principled way of experimentally analyzing and evaluating different visual discovery approaches, and compare our method against prior work on the Paris Street View dataset (introduced here). We also evaluate our method on the task of scene classification, demonstrating state-of-the-art performance on the MIT Scene-67 dataset.

Paper & Presentation

NIPS paper (pdf, 1.3MB; updated 12/7/2015 to fix a typo in the appendix)

Example Slides (pptx, 6.4MB)

Citation

Carl Doersch, Abhinav Gupta, and Alexei A. Efros. Mid-Level Visual Element Discovery as Discriminative Mode Seeking. In NIPS 2013.

[Show BibTex]

Related Papers

A. Bansal, A. Shrivastava, C. Doersch, A. Gupta, Mid-level Elements for Object Detection arXiv preprint arXiv:1504.07284 2015 (Uses discriminative mode seeking to obtain near state-of-the-art results on object detection)

A. Bansal, A. Shrivastava, C. Doersch, A. Gupta, Mid-level Elements for Object Detection arXiv preprint arXiv:1504.07284 2015 (Uses discriminative mode seeking to obtain near state-of-the-art results on object detection)

C. Doersch, S. Singh, A. Gupta, J. Sivic, and A. A. Efros. What Makes Paris Look like Paris? ACM Transactions on Graphics (SIGGRAPH 2012), August 2012, vol. 31, No. 3.

S. Singh, A. Gupta and A. A. Efros. 2012. Unsupervised discovery of mid-level discriminative patches. In ECCV 2012.

Code

Note that the code for this project has been modified from the version used in the paper. The qualitative behavior should be the same, but the output may not be identical to the results in the paper. |

Additional Materials

- Full Results: Paris Elements

An example of the results obtained entirely automatically, using our algorithm to find the unique visual elements of Paris. Compare to our previous results on the same data. WARNING: This page contains about 10000 patches; don't click the link unless your browser can handle it! - Visual Elements Learned For Indoor 67 The elements used for our state-of-the-art Indoor 67 classifier.

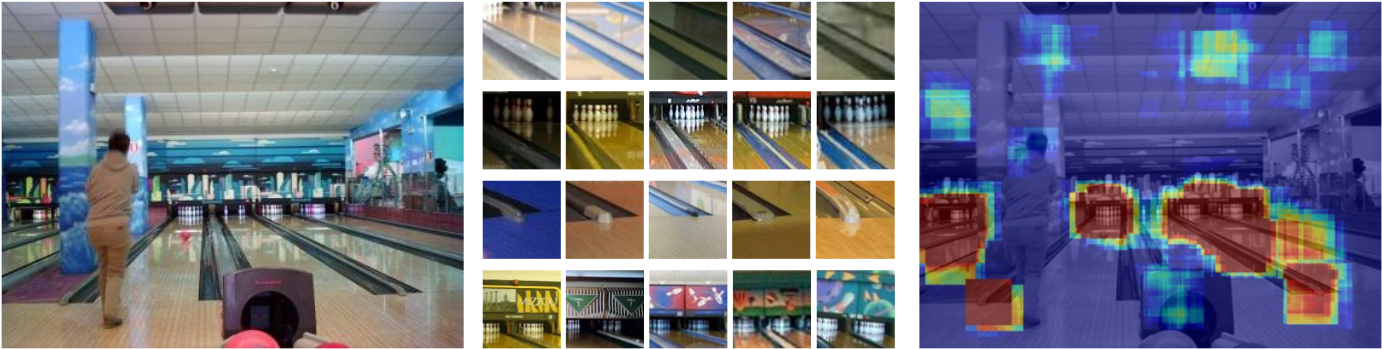

- Heatmaps generated for both confident correctly-classified images on Indoor 67 as well as confident errors.

- Learned element templates and full image feature vectors for indoor67 images (.mat files in .tar.gz): all (3.3GB); elements only (117MB); README describing archive contents.

Funding

This research was supported by:

- NDSEG Fellowship for Carl Doersch

- Amazon Web Services

- ONR N000141010934

- IARPA via the Air Force Research Laboratory (The U.S. Government is authorized to reproduce and distribute reprints for governmental purposes notwithstanding any copyright annotation thereon. Disclaimer: The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of IARPA, AFRL or the U.S. Government.)