Programming Project #3 for the 15-862 and 15-663 class (proj3g)

15-463: Computational Photography

This project explores gradient-domain processing, a simple technique with a broad set of applications including blending, tone-mapping, and non-photorealistic rendering. For the core project, we will focus on "Poisson blending"; tone-mapping and NPR can be investigated as bells and whistles.

The primary goal of this assignment is to seamlessly blend an object or texture from a source image into a target image. The simplest method would be to just copy and paste the pixels from one image directly into the other. Unfortunately, this will create very noticeable seams, even if the backgrounds are well-matched. How can we get rid of these seams without doing too much perceptual damage to the source region?

The insight is that people often care much more about the gradient of an image than the overall intensity. So we can set up the problem as finding values for the target pixels that maximally preserve the gradient of the source region without changing any of the background pixels. Note that we are making a deliberate decision here to ignore the overall intensity! So a green hat could turn red, but it will still look like a hat.

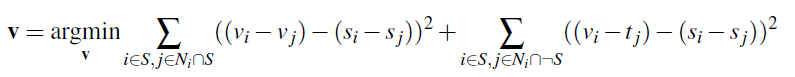

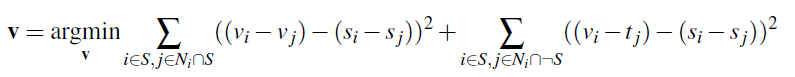

We can formulate our objective as a least squares problem. Given the pixel intensities of the source image "s" and of the target image "t", we want to solve for new intensity values "v" within the source region "S":

$$\mathbf{v} = \arg\min_{\mathbf{v}} \sum_{i \in S,\ j \in N_i \cap S} \big( (v_i - v_j) - (s_i - s_j) \big)^2 + \sum_{i \in S,\ j \in N_i,\ j \notin S} \big( (v_i - t_j) - (s_i - s_j) \big)^2$$

Here, each "i" is a pixel in the source region "S", and each "j" is a 4-neighbor of "i" (the set N_i). Each summation guides the gradient values to match those of the source region. In the first summation, the gradient is over two variable pixels; in the second, one pixel is variable and one is in the fixed target region.

The method presented above is called "Poisson blending". Check out the Perez et al. 2003 paper to see sample results, or to wallow in extraneous math. This is just one example of a more general set of gradient-domain processing techniques. The general idea is to create an image by solving for specified pixel intensities and gradients.

As an example, consider this picture of the bear and swimmers being pasted into a pool of water. Let's ignore the bear for a moment and consider the swimmers. In the above notation, the source image "s" is the original image the swimmers were cut out of; that image isn't even shown, because we are only interested in the cut-out of the swimmers, i.e., the region "S". "S" includes the swimmers and a bit of light blue background. You can clearly see the region "S" in the left image, as the cutout blends very poorly into the pool of water. The pool of water, before things were rudely pasted into it, is the target image "t".

The implementation for gradient domain processing is not complicated, but it is easy to make a mistake, so let's start with a toy example. In this example we'll compute the x and y gradients from an image s, then use all the gradients, plus one pixel intensity, to reconstruct an image v.

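Denote the intensity of the source image at (x, y) as s(x,y) and the values of the image to solve for as v(x,y). For each pixel, then, we have two objectives (1 and 2 below). These constrain only differences between pixels, so v could be shifted by any constant without changing them; objective 3 adds a single extra constraint that pins down the overall intensity.

| # | Objective | Meaning |
| --- | --- | --- |
| 1. | minimize ( v(x+1,y)-v(x,y) - (s(x+1,y)-s(x,y)) )^2 | the x-gradients of v should closely match the x-gradients of s |
| 2. | minimize ( v(x,y+1)-v(x,y) - (s(x,y+1)-s(x,y)) )^2 | the y-gradients of v should closely match the y-gradients of s |
| 3. | minimize (v(1,1)-s(1,1))^2 | the top left corners of the two images should be the same color |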
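The three objectives can be turned into one small least-squares system. The following is a minimal sketch in Python (standard library only) rather than the MATLAB of the starter code. The names `toy_reconstruct` and `solve_least_squares` are hypothetical, indices are 0-based (so the top left pixel is `s[0][0]`), and the dense normal-equations solve is an assumption that only makes sense at toy sizes; a real image needs a sparse solver.

```python
def solve_least_squares(rows, rhs, n):
    """Minimize ||A v - b||^2 for a tiny system.

    rows[e] is a dict {variable_index: coefficient} for equation e.
    Forms the dense normal equations (A^T A) v = A^T b and solves them
    by Gaussian elimination with partial pivoting.
    """
    ata = [[0.0] * n for _ in range(n)]
    atb = [0.0] * n
    for row, b in zip(rows, rhs):
        for i, ci in row.items():
            atb[i] += ci * b
            for j, cj in row.items():
                ata[i][j] += ci * cj
    aug = [ata[i] + [atb[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    v = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * v[j] for j in range(i + 1, n))
        v[i] = (aug[i][n] - tail) / aug[i][i]
    return v


def toy_reconstruct(s):
    """Rebuild image s (list of rows) from its gradients plus one pixel."""
    h, w = len(s), len(s[0])

    def var(x, y):
        # Map pixel (x, y) to a variable number.
        return y * w + x

    rows, rhs = [], []
    for y in range(h):
        for x in range(w):
            if x + 1 < w:  # objective 1: match the x-gradient
                rows.append({var(x + 1, y): 1.0, var(x, y): -1.0})
                rhs.append(s[y][x + 1] - s[y][x])
            if y + 1 < h:  # objective 2: match the y-gradient
                rows.append({var(x, y + 1): 1.0, var(x, y): -1.0})
                rhs.append(s[y + 1][x] - s[y][x])
    # Objective 3: pin the top left pixel to fix the overall intensity.
    rows.append({var(0, 0): 1.0})
    rhs.append(s[0][0])
    v = solve_least_squares(rows, rhs, h * w)
    return [v[y * w:(y + 1) * w] for y in range(h)]


s = [[0.1, 0.5, 0.9], [0.3, 0.2, 0.8]]
v = toy_reconstruct(s)
max_error = max(abs(v[y][x] - s[y][x]) for y in range(2) for x in range(3))
print(max_error)  # should be close to zero if the system is set up correctly
```

If the reconstruction does not match the input almost exactly, the usual suspects are a sign error in one of the gradient rows or a mismatch between the pixel-to-variable mapping and the image layout.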

Step 1: Select source and target regions. Select the boundaries of a region in the source image and specify a location in the target image where it should be blended. Then, transform (e.g., translate) the source image so that indices of pixels in the source and target regions correspond.

I've provided starter code (getMask.m, alignSource.m) to help with this. You may want to augment the code to allow rotation or resizing into the target region. You can be a bit sloppy about selecting the source region -- just make sure that the entire object is contained. Ideally, the background of the object in the source region and the surrounding area of the target region will be of similar color.
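To illustrate what "indices correspond" means, here is a minimal sketch of the translation-only case in Python (standard library only) rather than MATLAB. The function `align_source` and its `offset` parameter are hypothetical and are not a description of what the provided alignSource.m does.

```python
def align_source(source, mask, target_shape, offset):
    """Translate a source image and its mask into target coordinates.

    source: 2D list of intensities; mask: 2D list of bools, same shape.
    target_shape: (height, width) of the target image.
    offset: (dy, dx), where the source's top left pixel lands in the target.
    Source pixels that fall outside the target are dropped.
    """
    th, tw = target_shape
    dy, dx = offset
    aligned = [[0.0] * tw for _ in range(th)]
    aligned_mask = [[False] * tw for _ in range(th)]
    for y, row in enumerate(source):
        for x, value in enumerate(row):
            ty, tx = y + dy, x + dx
            if 0 <= ty < th and 0 <= tx < tw:
                # Copy every source pixel, not only masked ones: gradients
                # at the region boundary need source values just outside it.
                aligned[ty][tx] = value
                aligned_mask[ty][tx] = mask[y][x]
    return aligned, aligned_mask


source = [[1.0, 2.0], [3.0, 4.0]]
mask = [[True, False], [True, True]]
aligned, aligned_mask = align_source(source, mask, (4, 5), (1, 2))
```

After this step, `aligned[y][x]`, `aligned_mask[y][x]`, and the target pixel at `(y, x)` all refer to the same location, which is what the blending constraints assume.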

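Step 2: Solve the blending constraints:

$$\mathbf{v} = \arg\min_{\mathbf{v}} \sum_{i \in S,\ j \in N_i \cap S} \big( (v_i - v_j) - (s_i - s_j) \big)^2 + \sum_{i \in S,\ j \in N_i,\ j \notin S} \big( (v_i - t_j) - (s_i - s_j) \big)^2$$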

Step 3: Copy the solved values v_i into your target image. For RGB images, process each channel separately. Show at least three results of Poisson blending. Explain any failure cases (e.g., weird colors, blurred boundaries, etc.).
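Steps 2 and 3 can be sketched for a single channel as follows, in Python (standard library only) rather than MATLAB. The names `build_blend_system` and `cgls` are hypothetical. The sketch assumes the source is already aligned with the target and that the region does not touch the image border. It also swaps in a small conjugate-gradient least-squares iteration for the sparse direct solve you would normally use, which is a substitution made only to keep the example self-contained.

```python
def build_blend_system(s, t, mask):
    """One equation per (pixel i in S, 4-neighbor j), as in the objective."""
    h, w = len(mask), len(mask[0])
    index = {}  # pixel (y, x) in S -> variable number
    for y in range(h):
        for x in range(w):
            if mask[y][x]:
                index[(y, x)] = len(index)
    rows, rhs = [], []
    for (y, x), i in index.items():
        for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
            grad = s[y][x] - s[ny][nx]  # s_i - s_j
            if mask[ny][nx]:
                # Both pixels unknown: v_i - v_j = s_i - s_j
                rows.append({i: 1.0, index[(ny, nx)]: -1.0})
                rhs.append(grad)
            else:
                # Neighbor is a fixed target pixel: v_i = s_i - s_j + t_j
                rows.append({i: 1.0})
                rhs.append(grad + t[ny][nx])
    return rows, rhs, index


def cgls(rows, rhs, n, iters=500, tol=1e-20):
    """Minimize ||A v - b||^2 with conjugate gradients (CGLS)."""
    def matvec(vec):
        return [sum(c * vec[k] for k, c in row.items()) for row in rows]

    def rmatvec(res):
        out = [0.0] * n
        for row, ri in zip(rows, res):
            for k, c in row.items():
                out[k] += c * ri
        return out

    v = [0.0] * n
    r = list(rhs)          # residual b - A v, with v = 0
    g = rmatvec(r)         # gradient direction A^T r
    p = list(g)
    gamma = sum(x * x for x in g)
    for _ in range(iters):
        if gamma < tol:
            break
        q = matvec(p)
        alpha = gamma / sum(x * x for x in q)
        v = [vi + alpha * pi for vi, pi in zip(v, p)]
        r = [ri - alpha * qi for ri, qi in zip(r, q)]
        g = rmatvec(r)
        gamma_new = sum(x * x for x in g)
        p = [gi + (gamma_new / gamma) * pi for gi, pi in zip(g, p)]
        gamma = gamma_new
    return v


# Tiny demo: the source is the target plus a constant offset of 0.5.
t = [[0.1 * (x + y) for x in range(4)] for y in range(4)]
s = [[value + 0.5 for value in row] for row in t]
mask = [[1 <= y <= 2 and 1 <= x <= 2 for x in range(4)] for y in range(4)]

rows, rhs, index = build_blend_system(s, t, mask)
v = cgls(rows, rhs, len(index))

# Step 3: copy the solved values into a copy of the target.
blended = [row[:] for row in t]
for (y, x), i in index.items():
    blended[y][x] = v[i]
```

In this demo the source and target have identical gradients, so the blend should reproduce the target inside the region and discard the 0.5 offset, which is the "ignore the overall intensity" behavior described earlier. For an RGB image, the same system would be built and solved once per channel.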

Tips

1. Pre-initialize your sparse matrix with sparse([], [], [], M, N, nzmax) for a matrix with M equations and N variables and at most nzmax non-zero entries.

2. For your first blending example, try something that you know should work, such as the included penguins on top of the snow in the hiking image.

3. Object region selection can be done very crudely, with lots of room around the object.
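As a rough guide to the sizes in tip 1, the sketch below counts M, N, and the non-zero entries for a given mask, in Python (standard library only) rather than MATLAB. The function `system_size` is hypothetical. It assumes one equation per (pixel, 4-neighbor) pair, as in the blending objective, and a region that does not touch the image border.

```python
def system_size(mask):
    """Return (M, N, nonzeros) for the blending system of a boolean mask."""
    h, w = len(mask), len(mask[0])
    n_vars = sum(1 for row in mask for flag in row if flag)
    n_eqs = 0
    nonzeros = 0
    for y in range(h):
        for x in range(w):
            if not mask[y][x]:
                continue
            for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                n_eqs += 1
                # Two unknowns if the neighbor is inside S, otherwise one.
                nonzeros += 2 if mask[ny][nx] else 1
    return n_eqs, n_vars, nonzeros


mask = [[1 <= y <= 2 and 1 <= x <= 2 for x in range(4)] for y in range(4)]
M, N, nonzeros = system_size(mask)
nzmax = 2 * M  # safe upper bound: no equation has more than two unknowns
```

Under these assumptions, M is four times the number of pixels in the region, and `2 * M` is always large enough for nzmax.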

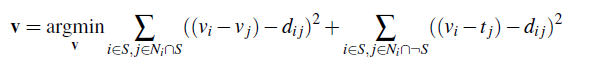

For blending with mixed gradients, follow the same steps as Poisson blending, but use the gradient in source or target with the larger magnitude as the guide, rather than the source gradient:

$$\mathbf{v} = \arg\min_{\mathbf{v}} \sum_{i \in S,\ j \in N_i \cap S} \big( (v_i - v_j) - d_{ij} \big)^2 + \sum_{i \in S,\ j \in N_i,\ j \notin S} \big( (v_i - t_j) - d_{ij} \big)^2$$

Here "d_ij" is the value of the gradient from the source or the target image with larger magnitude, i.e. if abs(s_i-s_j) > abs(t_i-t_j), then d_ij = s_i-s_j; else d_ij = t_i-t_j. Show at least one result of blending using mixed gradients. One possibility is to blend a picture of writing on a plain background onto another image.
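The rule for d_ij translates directly into a small helper. This is a sketch in Python (standard library only) rather than MATLAB, and the name `mixed_gradient` is hypothetical.

```python
def mixed_gradient(s_i, s_j, t_i, t_j):
    """Return d_ij: the source or target gradient with the larger magnitude.

    The sign of the chosen gradient is kept; only the comparison uses
    absolute values. On a tie the target gradient is used, matching the
    "else" branch of the rule.
    """
    ds = s_i - s_j
    dt = t_i - t_j
    return ds if abs(ds) > abs(dt) else dt


# Source edge is stronger, so the source gradient is chosen.
d_strong_source = mixed_gradient(0.75, 0.25, 0.5, 0.375)
# Source is flat here, so the target gradient (with its sign) is chosen.
d_flat_source = mixed_gradient(0.5, 0.5, 0.25, 0.75)
```

In a Poisson blending implementation, this value would replace `s_i - s_j` on the right-hand side of each equation; the left-hand side and the handling of fixed target neighbors stay the same.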

To make sure we do not miss any of your hard work, please explicitly mention all extra work you did in both your README and your webpage.

Color2Gray (20 pts)

Sometimes, in converting a color image to grayscale (e.g., when printing to a laser printer), we lose important contrast information, making the image difficult to understand. For example, compare the color version of the image on the right with its grayscale version produced by rgb2gray.

Can you do better than rgb2gray? Gradient-domain processing provides one avenue: create a gray image that has similar intensity to the rgb2gray output but has similar contrast to the original RGB image. This is an example of a tone-mapping problem, conceptually similar to that of converting HDR images to RGB displays. To get credit for this, show the grayscale image that you produce (the numbers should be easily readable).

Hint: Try converting the image to HSV space and looking at the gradients in each channel. Then, approach it as a mixed gradients problem where you also want to preserve the grayscale intensity.
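To see why the HSV channels help, here is a small sketch in Python (standard library only) rather than MATLAB. It builds two colors that a weighted grayscale conversion cannot tell apart and compares them in HSV. The helper `to_gray` is hypothetical and uses the luminance weights 0.2989, 0.5870, 0.1140 as a stand-in for rgb2gray; the two colors are made up for illustration.

```python
import colorsys


def to_gray(rgb):
    """Weighted sum of R, G, B, standing in for rgb2gray."""
    r, g, b = rgb
    return 0.2989 * r + 0.5870 * g + 0.1140 * b


# A pure red and a darker green chosen to have the same gray value.
red = (1.0, 0.0, 0.0)
green = (0.0, 0.2989 / 0.5870, 0.0)

gray_diff = to_gray(red) - to_gray(green)

# colorsys returns (hue, saturation, value), each in [0, 1].
hsv_red = colorsys.rgb_to_hsv(*red)
hsv_green = colorsys.rgb_to_hsv(*green)
hsv_diff = tuple(a - b for a, b in zip(hsv_red, hsv_green))

print("gray difference:", gray_diff)
print("HSV differences (h, s, v):", hsv_diff)
```

The gray values are essentially identical, so an edge between these two colors vanishes in the grayscale image, while the hue and value channels still show a clear difference. Those channel gradients are candidates for the guide in a mixed-gradients formulation. Note that hue is circular, so hue differences near the wrap-around point need care.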

More gradient domain processing (up to 20 pts)

Many other applications are possible, including non-photorealistic rendering, edge enhancement, and texture or color transfer. See Perez et al. 2003 or Gradient Shop for further ideas.

Use both words and images to show us what you've done.

Place all code in your code/ directory. Include a README describing the contents of each file.

In the website in your www/ directory, please: