Controllable Visual-Tactile Synthesis

Ruihan Gao, Wenzhen Yuan, Jun-Yan Zhu

IEEE International Conference on Computer Vision (ICCV) (2023)

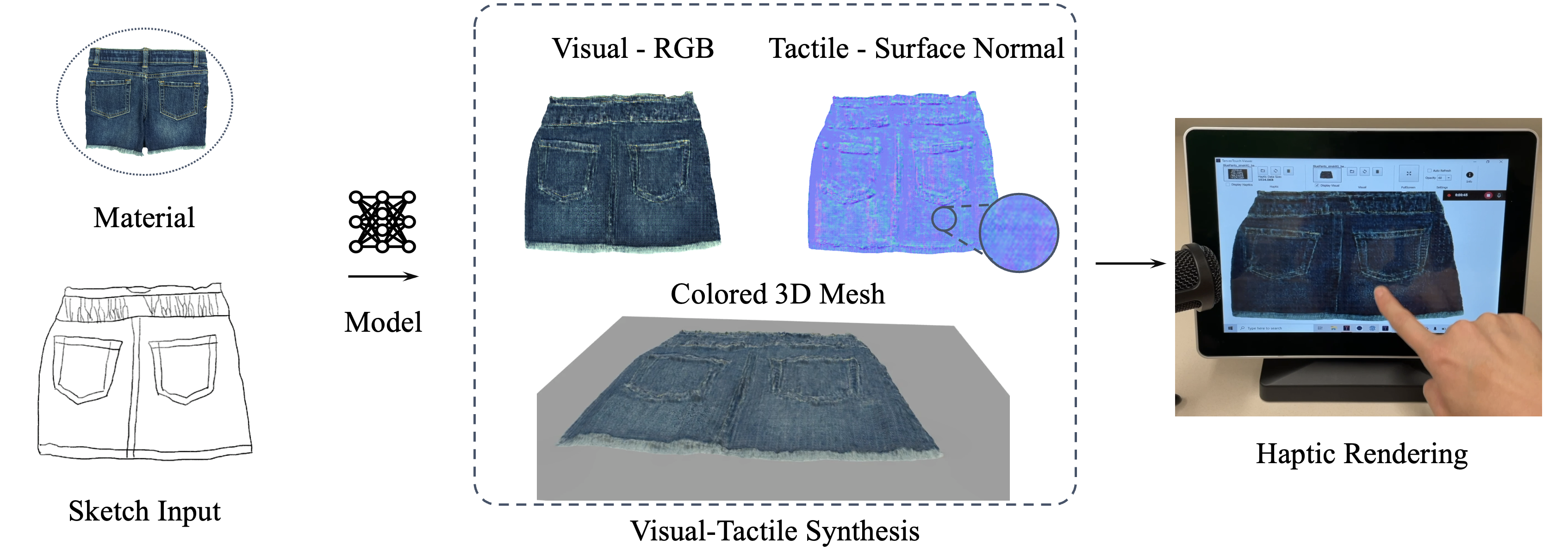

Deep generative models have various content creation applications such as graphic design, e-commerce, and virtual try-on. However, current works mainly focus on synthesizing realistic visual outputs, often ignoring other sensory modalities, such as touch, which limits physical interaction with users. In this work, we leverage deep generative models to create a multi-sensory experience where users can touch and see the synthesized object when sliding their fingers on a haptic surface. The main challenges lie in the significant scale discrepancy between vision and touch sensing and the lack of explicit mapping from touch sensing data to a haptic rendering device. To bridge this gap, we collect high-resolution tactile data with a GelSight sensor and create a new visuotactile clothing dataset. We then develop a conditional generative model that synthesizes both visual and tactile outputs from a single sketch. We evaluate our method in terms of image quality and tactile rendering accuracy. Finally, we introduce a pipeline to render high-quality visual and tactile outputs on an electroadhesion-based haptic device for an immersive experience, allowing for challenging materials and editable sketch inputs.

Ruihan Gao, Wenzhen Yuan, Jun-Yan Zhu (2023). Controllable Visual-Tactile Synthesis. IEEE International Conference on Computer Vision (ICCV).

@inproceedings{gao2023controllable,
  title = {Controllable Visual-Tactile Synthesis},
  author = {Ruihan Gao and Wenzhen Yuan and Jun-Yan Zhu},
  booktitle = {IEEE International Conference on Computer Vision (ICCV)},
  year = {2023},
}